Machine learning developers gained new abilities to develop and run their ML programs on the framework and hardware of their choice thanks to the OpenXLA Project, which today announced the availability of key open source components.

Data scientists and ML engineers often spend a lot of time optimizing their models for each hardware target. Whether they work in a framework like TensorFlow or PyTorch, and whether they target GPUs or TPUs, they have had no way to avoid this manual effort, which consumes precious time and makes it difficult to move applications to different hardware later.

This is the general problem targeted by the folks behind the OpenXLA Project, which was founded last fall and today includes Alibaba, Amazon Web Services, AMD, Apple, Arm, Cerebras Systems, Google, Graphcore, Hugging Face, Intel, Meta, and NVIDIA as its members.

By creating a unified machine learning compiler that works across a range of ML development frameworks, hardware platforms, and runtimes, OpenXLA can accelerate the delivery of ML applications and provide greater code portability.

Today, the group announced the availability of three open source tools as part of the project. XLA is an ML compiler for CPUs, GPUs, and accelerators; StableHLO is an operation set for high-level operations (HLO) in ML that provides portability between frameworks and compilers; and IREE (Intermediate Representation Execution Environment) is an end-to-end MLIR (Multi-Level Intermediate Representation) compiler and runtime for mobile and edge deployments. All three are available for download from the OpenXLA GitHub site.
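To make the framework-to-compiler hand-off a little more concrete, here is a minimal sketch in JAX, one of the frameworks OpenXLA supports. It assumes a recent JAX release in which `jax.jit(...).lower(...)` returns a lowered module that can be printed in the StableHLO dialect and then compiled by XLA for whatever backend is available. The `predict` function and its inputs are hypothetical placeholders chosen only for illustration.

```python
import jax
import jax.numpy as jnp

def predict(w, b, x):
    # Hypothetical tiny model: one dense layer followed by a tanh activation.
    return jnp.tanh(x @ w + b)

w = jnp.ones((4, 2))
b = jnp.zeros((2,))
x = jnp.ones((3, 4))

# Trace the function and lower it to a portable IR, without compiling it yet.
lowered = jax.jit(predict).lower(w, b, x)

# Print the lowered module as StableHLO (MLIR text), the framework-neutral
# op set that compilers such as XLA or IREE can consume.
stablehlo_text = lowered.as_text(dialect="stablehlo")
print(stablehlo_text)

# Let XLA compile the module for the default backend (CPU, GPU, or TPU) and run it.
compiled = lowered.compile()
result = compiled(w, b, x)
print(result)
```

The design point the sketch illustrates is the separation of stages: the framework only has to emit StableHLO, and the compiler behind it decides how to optimize for the hardware at hand.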

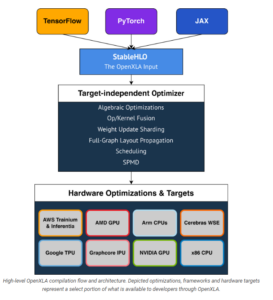

Initial frameworks supported by OpenXLA include TensorFlow, PyTorch, and JAX. JAX, a new Google framework designed for transforming numerical functions, is described as bringing together a modified version of autograd and TensorFlow's XLA while following the structure and workflow of NumPy. Initial hardware targets and optimizations include Intel CPUs, Nvidia GPUs, Google TPUs, AMD GPUs, Arm CPUs, AWS Trainium and Inferentia, Graphcore’s IPU, and the Cerebras Wafer-Scale Engine (WSE). OpenXLA’s “target-independent optimizer” handles simplification of algebraic expressions, op/kernel fusion, weight-update sharding, full-graph layout propagation, scheduling, and SPMD (single program, multiple data) for parallelism.
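What "transforming numerical functions" means in practice can be sketched in a few lines of JAX. The example below is a minimal illustration, not an OpenXLA benchmark: the `loss` function and the data are hypothetical, and whether XLA actually fuses the elementwise operations into fewer kernels depends on the backend. It shows three composable transformations applied to an ordinary NumPy-style function: `jax.grad` (the autograd-style derivative), `jax.jit` (hand-off to XLA), and `jax.vmap` (automatic batching).

```python
import jax
import jax.numpy as jnp

def loss(w, x, y):
    # Hypothetical mean squared error of a linear model, written NumPy-style.
    pred = x @ w
    return jnp.mean((pred - y) ** 2)

# grad transforms loss into a new function returning d(loss)/d(w).
grad_loss = jax.grad(loss)

# jit traces the gradient computation and compiles it with XLA, which is
# free to fuse the subtract, square, and mean into fewer device kernels.
fast_grad = jax.jit(grad_loss)

# vmap turns the per-example loss into a batched function without a Python loop:
# w is shared (None), while x and y are mapped over their first axis (0).
per_example_loss = jax.vmap(loss, in_axes=(None, 0, 0))

w = jnp.array([1.0, 2.0])
x = jnp.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
y = jnp.array([1.0, 1.0, 1.0])

# Analytically, the gradient is (2/3) * x.T @ (x @ w - y), i.e. [4/3, 2] here.
print(fast_grad(w, x, y))
# Squared error of each example separately: [0, 1, 4] for these inputs.
print(per_example_loss(w, x, y))
```

Because each transformation returns an ordinary function, they compose freely, which is what lets the same model code be differentiated, batched, and then compiled for different hardware targets.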

The OpenXLA compiler products can be used for a variety of ML use cases, including full-scale training of massive deep learning models such as large language models (LLMs) and even generative computer vision models like Stable Diffusion. They can also be used for inference; Waymo already uses OpenXLA for real-time inferencing on its self-driving cars, according to a post today on the Google open source blog.

The OpenXLA compiler ecosystem provides portability between ML development tools and hardware targets (Image source: OpenXLA Project)

OpenXLA members touted some of their early successes with the new compiler. Alibaba, for instance, says it was able to train a GPT-2 model on Nvidia GPUs 72% faster using OpenXLA, and saw an 88% speedup for a Swin Transformer training task on GPUs.

Hugging Face, meanwhile, said it saw about a 100% speedup when it paired XLA with its text generation model written in TensorFlow. “OpenXLA promises standardized building blocks upon which we can build much needed interoperability, and we can’t wait to follow and contribute!” said Morgan Funtowicz, head of machine learning optimization for the Brooklyn, New York, company.

Facebook was able to “achieve significant performance improvements on important projects,” including using XLA on PyTorch models running on Cloud TPUs, said Soumith Chintala, the lead maintainer for PyTorch.

The chip startups are pleased with XLA, which reduces customers' risk in adopting relatively new, unproven hardware. “Our IPU compiler pipeline has used XLA since it was made public,” said David Norman, Graphcore’s director of software design. “Thanks to XLA’s platform independence and stability, it provides an ideal frontend for bringing up novel silicon.”

“OpenXLA helps extend our user reach and accelerate time to solution by providing the Cerebras Wafer-Scale Engine with a common interface to higher level ML frameworks,” said Andy Hock, a vice president and head of product at Cerebras. “We are tremendously excited to see the OpenXLA ecosystem available for even broader community engagement, contribution, and use on GitHub.”

AMD and Arm, which are battling bigger chipmakers for pieces of the ML training and serving pies, are also happy members of the OpenXLA Project.

“We value projects with open governance, flexible and broad applicability, cutting-edge features and top-notch performance and are looking forward to the continued collaboration to expand [the] open source ecosystem for ML developers,” Alan Lee, AMD’s corporate vice president of software development, said in the blog.

“The OpenXLA Project marks an important milestone on the path to simplifying ML software development,” said Peter Greenhalgh, vice president of technology and fellow at Arm. “We are fully supportive of the OpenXLA mission and look forward to leveraging the OpenXLA stability and standardization across the Arm Neoverse hardware and software roadmaps.”

Curiously absent are IBM, which continues to innovate on chips with its Power10 processor, and Microsoft, the world’s second-largest cloud provider behind AWS.

Related Items:

Google Announces Open Source ML Compiler Project, OpenXLA

AMD Joins New PyTorch Foundation as Founding Member

Inside Intel’s nGraph, a Universal Deep Learning Compiler
