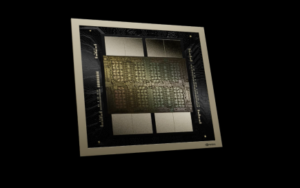

Nvidia Introduces New Blackwell GPU for Trillion-Parameter AI Models

Nvidia’s latest and fastest GPU, code-named Blackwell, is here and will underpin the company’s AI plans this year. The chip offers performance improvements over its predecessors, including the red-hot H100 and A100 GPUs. Customers are demanding more AI performance, and Blackwell arrives amid pent-up demand for higher-performing GPUs.

The GPU can train 1-trillion-parameter models, said Ian Buck, vice president of high-performance and hyperscale computing at Nvidia, in a press briefing.

Systems with up to 576 Blackwell GPUs can be linked together to train multi-trillion-parameter models.

The GPU has 208 billion transistors and was made using TSMC’s 4-nanometer process. That is about 2.5 times as many transistors as the predecessor H100 GPU, which is the first clue to significant performance improvements.

AI is a memory-intensive process, and data needs to be temporarily stored in RAM. The GPU has 192GB of HBM3E memory, the same type of memory used in last year’s H200 GPU.

Nvidia is focusing on scaling the number of Blackwell GPUs to take on larger AI jobs. “This will expand AI data center scale beyond 100,000 GPU,” Buck said.

The GPU provides “20 petaflops of AI performance on a single GPU,” Buck said.

Buck provided fuzzy performance numbers designed to impress, and real-world performance numbers were unavailable. However, it is likely that Nvidia used FP4 – a new data type with Blackwell – to measure performance and reach the 20-petaflop performance number.

The predecessor H100 provided about 4 petaflops of performance for the FP8 data type and about 2 petaflops of performance for FP16.

“It delivers four times the training performance of Hopper, 30 times the inference performance overall, and 25 times better energy efficiency,” Buck said.

The FP4 data type is for inferencing. It allows the GPU to process smaller packages of data more quickly and deliver results back much faster. The result? Faster AI performance but less precision. FP64 and FP32 provide higher-precision computing but are not designed for AI.
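To make the precision trade-off concrete, here is a minimal sketch of what rounding to a 4-bit float can look like. It assumes FP4 uses the E2M1 layout (one sign bit, two exponent bits, one mantissa bit); the article does not state which encoding Blackwell uses, and the function name `quantize_fp4` is hypothetical. Real inference stacks also apply scale factors to blocks of values, which this sketch leaves out.

```python
# Assumption: FP4 means the E2M1 layout, whose non-negative representable
# magnitudes are the eight values below (16 bit patterns with the sign bit).
FP4_E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_fp4(x: float) -> float:
    """Round x to the nearest representable E2M1 value, saturating at +/-6.

    Ties go to the smaller magnitude here for simplicity; hardware
    rounding modes may differ.
    """
    sign = -1.0 if x < 0 else 1.0
    magnitude = min(abs(x), FP4_E2M1_MAGNITUDES[-1])
    nearest = min(FP4_E2M1_MAGNITUDES, key=lambda v: abs(v - magnitude))
    return sign * nearest


if __name__ == "__main__":
    for value in (0.3, 1.2, 2.4, 5.1, -7.9):
        q = quantize_fp4(value)
        print(f"{value:+.2f} -> {q:+.1f} (error {abs(value - q):.2f})")
```

With so few representable values, the rounding error on any single number can be large, which is why the format trades precision for speed.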

The GPU consists of two dies packaged together. They communicate via an interface called NV-HBI, which transfers information at 10 terabytes per second. Blackwell’s 192GB of HBM3E memory is supported by 8 TB/sec of memory bandwidth.

The Systems

Nvidia has also created systems with Blackwell GPUs and Grace CPUs. First, it created the GB200 Superchip, which pairs two Blackwell GPUs with its Grace CPU. Second, the company created a liquid-cooled, full-rack system called the GB200 NVL72, which has 36 GB200 Superchips and 72 GPUs interconnected in a grid format.

The GB200 NVL72 system delivers 720 petaflops of training performance and 1.4 exaflops of inferencing performance. It can support 27-trillion parameter model sizes. The GPUs are interconnected via a new NVLink interconnect, which has a bandwidth of 1.8TB/s.
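The rack-level figures can be sanity-checked against the per-GPU numbers quoted earlier. The sketch below is back-of-the-envelope arithmetic, not Nvidia's methodology. It assumes the 20-petaflop figure is FP4 and that a model's weights take 4 bits each, and it ignores activations, caches, and CPU-attached memory.

```python
GPUS_PER_RACK = 72            # from the article
FP4_PFLOPS_PER_GPU = 20       # Buck's single-GPU figure, assumed to be FP4
TRAINING_PFLOPS_RACK = 720    # from the article
HBM_GB_PER_GPU = 192          # from the article
MODEL_PARAMS = 27e12          # 27 trillion parameters
BYTES_PER_FP4_PARAM = 0.5     # assumption: 4 bits per weight

# 72 GPUs at 20 petaflops each, expressed in exaflops.
inference_exaflops = GPUS_PER_RACK * FP4_PFLOPS_PER_GPU / 1000

# Training throughput implied per GPU by the rack-level figure.
training_pflops_per_gpu = TRAINING_PFLOPS_RACK / GPUS_PER_RACK

# Total HBM in the rack versus the space the weights alone would need.
rack_memory_tb = GPUS_PER_RACK * HBM_GB_PER_GPU / 1000
model_weights_tb = MODEL_PARAMS * BYTES_PER_FP4_PARAM / 1e12

if __name__ == "__main__":
    print(f"Inference: {inference_exaflops:.2f} exaflops")
    print(f"Training per GPU: {training_pflops_per_gpu:.1f} petaflops")
    print(f"Rack HBM: {rack_memory_tb:.1f} TB, weights: {model_weights_tb:.1f} TB")
```

Under these assumptions, the 1.4-exaflop figure matches 72 GPUs at 20 petaflops each, and the weights of a 27-trillion-parameter model at 4 bits apiece would just fit in the rack's combined HBM.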

The GB200 NVL72 will be coming this year to cloud providers that include Google Cloud and Oracle Cloud. It will also be available via Microsoft’s Azure and AWS.

Nvidia is building an AI supercomputer with AWS called Project Ceiba, which can deliver 400 exaflops of AI performance.

“We’ve now upgraded it to be Grace-Blackwell, supporting….20,000 GPUs and will now deliver over 400 exaflops of AI,” Buck said, adding that the system will be live later this year.

Nvidia also announced an AI supercomputer called DGX SuperPOD, which has eight GB200 systems, or 576 GPUs, and can deliver 11.5 exaflops of FP4 AI performance. The GB200 systems can be connected via the NVLink interconnect, which can sustain high speeds over a short distance.
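The Project Ceiba and DGX SuperPOD totals both follow from multiplying GPU count by the 20-petaflop per-GPU figure. The short sketch below shows that arithmetic. The helper `aggregate_exaflops` is a hypothetical name, and the result is a peak number that assumes perfect scaling, which real clusters do not achieve.

```python
def aggregate_exaflops(gpu_count: int, pflops_per_gpu: float = 20.0) -> float:
    """Peak aggregate throughput in exaflops, assuming perfect scaling."""
    return gpu_count * pflops_per_gpu / 1000


# DGX SuperPOD: eight systems of 72 GPUs each (figures from the article).
superpod_gpus = 8 * 72
superpod_exaflops = aggregate_exaflops(superpod_gpus)

# Project Ceiba: 20,000 GPUs, per Buck's quote.
ceiba_exaflops = aggregate_exaflops(20_000)

if __name__ == "__main__":
    print(f"SuperPOD: {superpod_gpus} GPUs, {superpod_exaflops:.2f} exaflops")
    print(f"Ceiba: {ceiba_exaflops:.0f} exaflops")
```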

Furthermore, the DGX SuperPOD can link up tens of thousands of GPUs with the Nvidia Quantum InfiniBand networking stack. This networking bandwidth is 1,800 gigabytes per second.

Nvidia also introduced another system called DGX B200, which includes Intel’s 5th Gen Xeon chips, code-named Emerald Rapids. The system pairs eight B200 GPUs with two Emerald Rapids chips. It can also be designed into x86-based SuperPOD systems. The systems can provide up to 144 petaflops of AI performance and include 1.4TB of GPU memory and 64TB/s of memory bandwidth.
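Dividing the DGX B200 system totals by its eight GPUs gives a rough per-GPU picture. This is a sketch using only the rounded figures in the article, so the per-GPU values are approximations rather than official specifications.

```python
GPUS_PER_SYSTEM = 8          # from the article
SYSTEM_AI_PFLOPS = 144       # from the article
SYSTEM_MEMORY_TB = 1.4       # from the article (rounded)
SYSTEM_BANDWIDTH_TBPS = 64   # from the article

# Per-GPU values implied by the system-level totals.
pflops_per_gpu = SYSTEM_AI_PFLOPS / GPUS_PER_SYSTEM
memory_gb_per_gpu = SYSTEM_MEMORY_TB * 1000 / GPUS_PER_SYSTEM
bandwidth_tbps_per_gpu = SYSTEM_BANDWIDTH_TBPS / GPUS_PER_SYSTEM

if __name__ == "__main__":
    print(f"AI performance per GPU: {pflops_per_gpu:.0f} petaflops")
    print(f"Memory per GPU: about {memory_gb_per_gpu:.0f} GB")
    print(f"Memory bandwidth per GPU: {bandwidth_tbps_per_gpu:.0f} TB/s")
```

The implied bandwidth matches the 8 TB/sec quoted for a single Blackwell GPU, while the implied compute and memory per GPU come out somewhat below the 20-petaflop and 192GB headline figures.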

The DGX systems will be available later this year.

Predictive Maintenance

The Blackwell GPUs and DGX systems have predictive maintenance features to remain in top shape, said Charlie Boyle, vice president of DGX systems at Nvidia, in an interview with HPCwire.

“We’re monitoring 1000s of points of data every second to see how the job can get optimally done,” Boyle said.

The predictive maintenance features are similar to RAS (reliability, availability, and serviceability) features in servers. They combine hardware and software RAS features in the systems and GPUs.

“There are specific new … features in the chip to help us predict things that are going on. This feature isn’t looking at the trail of data coming off of all those GPUs,” Boyle said.

Nvidia is also implementing AI features for predictive maintenance.

“We have a predictive maintenance AI that we run at the cluster level so we see which nodes are healthy, which nodes aren’t,” Boyle said.

If the job dies, the feature helps minimize restart time. “On a very large job that used to take minutes, potentially hours, we’re trying to get that down to seconds,” Boyle said.

Software Updates

Nvidia also announced AI Enterprise 5.0, which is the overarching software platform that harnesses the speed and performance of the Blackwell GPUs.

As Datanami previously reported, the NIM software includes new tools for developers, including a copilot to make the software easier to use. Nvidia is trying to direct developers to write applications in CUDA, the company’s proprietary development platform.

The software costs $4,500 per GPU per year or $1 per GPU per hour.
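The two price points imply a break-even utilization, which the sketch below works out. It assumes the hourly rate is billed only for hours actually used and that no other fees or discounts apply; the article does not spell out the licensing terms, and the function names are hypothetical.

```python
ANNUAL_PRICE_USD = 4500.0    # per GPU per year, from the article
HOURLY_PRICE_USD = 1.0       # per GPU per hour, from the article
HOURS_PER_YEAR = 24 * 365    # 8,760 hours in a non-leap year


def hourly_plan_cost(hours_used: float) -> float:
    """Yearly cost for one GPU on the hourly plan."""
    return hours_used * HOURLY_PRICE_USD


def cheaper_plan(hours_used: float) -> str:
    """Return which plan costs less for the given yearly usage."""
    return "hourly" if hourly_plan_cost(hours_used) < ANNUAL_PRICE_USD else "annual"


# Usage level at which the two plans cost the same.
break_even_hours = ANNUAL_PRICE_USD / HOURLY_PRICE_USD
break_even_utilization = break_even_hours / HOURS_PER_YEAR

if __name__ == "__main__":
    print(f"Break-even: {break_even_hours:.0f} hours "
          f"({break_even_utilization:.0%} utilization)")
    print(f"Full-year hourly cost: ${hourly_plan_cost(HOURS_PER_YEAR):,.0f}")
```

Under these assumptions, a GPU that is busy more than roughly half the year is cheaper on the annual license.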

A feature called Nvidia NIM is a runtime that can automate the deployment of AI models. The goal is to make it faster and easier to run AI in organizations.

“Just let Nvidia do the work to produce these models for them in the most efficient enterprise-grade manner so that they can do the rest of their work,” said Manuvir Das, vice president for enterprise computing at Nvidia, during the press briefing.

NIM is more like a copilot for developers, helping them with coding, finding features, and using other tools to deploy AI more easily. It is one of the many new microservices that the company has added to the software package.